Farnell PDF

Moving Average Filters Chapter 15 - Analog Devices

Farnell element14:

See the trailer for the next exciting episode of The Ben Heck Show. Check back on Friday to be among the first to see the exclusive full show on element…

Connect your Raspberry Pi to a breadboard, download some code and create a push-button audio play project.

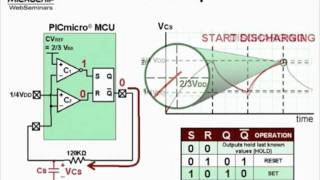

Other documentation:

| File | Last modified | Size |
|---|---|---|
| Farnell-NA555-NE555-..> | 08-Sep-2014 07:33 | 1.5M |
| Farnell-AD9834-Rev-D..> | 08-Sep-2014 07:32 | 1.2M |
| Farnell-MSP430F15x-M..> | 08-Sep-2014 07:32 | 1.3M |
| Farnell-AD736-Rev-I-..> | 08-Sep-2014 07:31 | 1.3M |
| Farnell-AD8307-Data-..> | 08-Sep-2014 07:30 | 1.3M |
| Farnell-Single-Chip-..> | 08-Sep-2014 07:30 | 1.5M |
| Farnell-Quadruple-2-..> | 08-Sep-2014 07:29 | 1.5M |
| Farnell-ADE7758-Rev-..> | 08-Sep-2014 07:28 | 1.7M |
| Farnell-MAX3221-Rev-..> | 08-Sep-2014 07:28 | 1.8M |
| Farnell-USB-to-Seria..> | 08-Sep-2014 07:27 | 2.0M |
| Farnell-AD8313-Analo..> | 08-Sep-2014 07:26 | 2.0M |
| Farnell-SN54HC164-SN..> | 08-Sep-2014 07:25 | 2.0M |
| Farnell-AD8310-Analo..> | 08-Sep-2014 07:24 | 2.1M |
| Farnell-AD8361-Rev-D..> | 08-Sep-2014 07:23 | 2.1M |
| Farnell-2N3906-Fairc..> | 08-Sep-2014 07:22 | 2.1M |
| Farnell-AD584-Rev-C-..> | 08-Sep-2014 07:20 | 2.2M |
| Farnell-ADE7753-Rev-..> | 08-Sep-2014 07:20 | 2.3M |
| Farnell-TLV320AIC23B..> | 08-Sep-2014 07:18 | 2.4M |
| Farnell-AD586BRZ-Ana..> | 08-Sep-2014 07:17 | 1.6M |
| Farnell-STM32F405xxS..> | 27-Aug-2014 18:27 | 1.8M |
| Farnell-MSP430-Hardw..> | 29-Jul-2014 10:36 | 1.1M |
| Farnell-LM324-Texas-..> | 29-Jul-2014 10:32 | 1.5M |
| Farnell-LM386-Low-Vo..> | 29-Jul-2014 10:32 | 1.5M |
| Farnell-NE5532-Texas..> | 29-Jul-2014 10:32 | 1.5M |
| Farnell-Hex-Inverter..> | 29-Jul-2014 10:31 | 875K |
| Farnell-AT90USBKey-H..> | 29-Jul-2014 10:31 | 902K |
| Farnell-AT89C5131-Ha..> | 29-Jul-2014 10:31 | 1.2M |
| Farnell-MSP-EXP430F5..> | 29-Jul-2014 10:31 | 1.2M |
| Farnell-Explorer-16-..> | 29-Jul-2014 10:31 | 1.3M |
| Farnell-TMP006EVM-Us..> | 29-Jul-2014 10:30 | 1.3M |
| Farnell-Gertboard-Us..> | 29-Jul-2014 10:30 | 1.4M |
| Farnell-LMP91051-Use..> | 29-Jul-2014 10:30 | 1.4M |
| Farnell-Thermometre-..> | 29-Jul-2014 10:30 | 1.4M |
| Farnell-user-manuel-..> | 29-Jul-2014 10:29 | 1.5M |
| Farnell-fx-3650P-fx-..> | 29-Jul-2014 10:29 | 1.5M |
| Farnell-2-GBPS-Diffe..> | 28-Jul-2014 17:42 | 2.7M |
| Farnell-LMT88-2.4V-1..> | 28-Jul-2014 17:42 | 2.8M |
| Farnell-Octal-Genera..> | 28-Jul-2014 17:42 | 2.8M |
| Farnell-Dual-MOSFET-..> | 28-Jul-2014 17:41 | 2.8M |
| Farnell-TLV320AIC325..> | 28-Jul-2014 17:41 | 2.9M |
| Farnell-SN54LV4053A-..> | 28-Jul-2014 17:20 | 5.9M |
| Farnell-TAS1020B-USB..> | 28-Jul-2014 17:19 | 6.2M |
| Farnell-TPS40060-Wid..> | 28-Jul-2014 17:19 | 6.3M |
| Farnell-TL082-Wide-B..> | 28-Jul-2014 17:16 | 6.3M |
| Farnell-RF-short-tra..> | 28-Jul-2014 17:16 | 6.3M |
| Farnell-maxim-integr..> | 28-Jul-2014 17:14 | 6.4M |
| Farnell-TSV6390-TSV6..> | 28-Jul-2014 17:14 | 6.4M |
| Farnell-Fast-Charge-..> | 28-Jul-2014 17:12 | 6.4M |
| Farnell-NVE-datashee..> | 28-Jul-2014 17:12 | 6.5M |
| Farnell-Excalibur-Hi..> | 28-Jul-2014 17:10 | 2.4M |
| Farnell-Excalibur-Hi..> | 28-Jul-2014 17:10 | 2.4M |
| Farnell-REF102-10V-P..> | 28-Jul-2014 17:09 | 2.4M |
| Farnell-TMS320F28055..> | 28-Jul-2014 17:09 | 2.7M |
| Farnell-MULTICOMP-Ra..> | 22-Jul-2014 12:35 | 5.9M |
| Farnell-RASPBERRY-PI..> | 22-Jul-2014 12:35 | 5.9M |
| Farnell-Dremel-Exper..> | 22-Jul-2014 12:34 | 1.6M |
| Farnell-STM32F103x8-..> | 22-Jul-2014 12:33 | 1.6M |
| Farnell-BD6xxx-PDF.htm | 22-Jul-2014 12:33 | 1.6M |
| Farnell-L78S-STMicro..> | 22-Jul-2014 12:32 | 1.6M |
| Farnell-RaspiCam-Doc..> | 22-Jul-2014 12:32 | 1.6M |
| Farnell-SB520-SB5100..> | 22-Jul-2014 12:32 | 1.6M |
| Farnell-iServer-Micr..> | 22-Jul-2014 12:32 | 1.6M |
| Farnell-LUMINARY-MIC..> | 22-Jul-2014 12:31 | 3.6M |
| Farnell-TEXAS-INSTRU..> | 22-Jul-2014 12:31 | 2.4M |
| Farnell-TEXAS-INSTRU..> | 22-Jul-2014 12:30 | 4.6M |
| Farnell-CLASS 1-or-2..> | 22-Jul-2014 12:30 | 4.7M |
| Farnell-TEXAS-INSTRU..> | 22-Jul-2014 12:29 | 4.8M |
| Farnell-Evaluating-t..> | 22-Jul-2014 12:28 | 4.9M |
| Farnell-LM3S6952-Mic..> | 22-Jul-2014 12:27 | 5.9M |
| Farnell-Keyboard-Mou..> | 22-Jul-2014 12:27 | 5.9M |
| Farnell-Full-Datashe..> | 15-Jul-2014 17:08 | 951K |
| Farnell-pmbta13_pmbt..> | 15-Jul-2014 17:06 | 959K |
| Farnell-EE-SPX303N-4..> | 15-Jul-2014 17:06 | 969K |
| Farnell-Datasheet-NX..> | 15-Jul-2014 17:06 | 1.0M |
| Farnell-Datasheet-Fa..> | 15-Jul-2014 17:05 | 1.0M |
| Farnell-MIDAS-un-tra..> | 15-Jul-2014 17:05 | 1.0M |
| Farnell-SERIAL-TFT-M..> | 15-Jul-2014 17:05 | 1.0M |
| Farnell-MCOC1-Farnel..> | 15-Jul-2014 17:05 | 1.0M |
| Farnell-TMR-2-series..> | 15-Jul-2014 16:48 | 787K |
| Farnell-DC-DC-Conver..> | 15-Jul-2014 16:48 | 781K |
| Farnell-Full-Datashe..> | 15-Jul-2014 16:47 | 803K |
| Farnell-TMLM-Series-..> | 15-Jul-2014 16:47 | 810K |
| Farnell-TEL-5-Series..> | 15-Jul-2014 16:47 | 814K |
| Farnell-TXL-series-t..> | 15-Jul-2014 16:47 | 829K |
| Farnell-TEP-150WI-Se..> | 15-Jul-2014 16:47 | 837K |
| Farnell-AC-DC-Power-..> | 15-Jul-2014 16:47 | 845K |
| Farnell-TIS-Instruct..> | 15-Jul-2014 16:47 | 845K |
| Farnell-TOS-tracopow..> | 15-Jul-2014 16:47 | 852K |
| Farnell-TCL-DC-traco..> | 15-Jul-2014 16:46 | 858K |
| Farnell-TIS-series-t..> | 15-Jul-2014 16:46 | 875K |
| Farnell-TMR-2-Series..> | 15-Jul-2014 16:46 | 897K |
| Farnell-TMR-3-WI-Ser..> | 15-Jul-2014 16:46 | 939K |
| Farnell-TEN-8-WI-Ser..> | 15-Jul-2014 16:46 | 939K |
| Farnell-Full-Datashe..> | 15-Jul-2014 16:46 | 947K |
| Farnell-HIP4081A-Int..> | 07-Jul-2014 19:47 | 1.0M |
| Farnell-ISL6251-ISL6..> | 07-Jul-2014 19:47 | 1.1M |
| Farnell-DG411-DG412-..> | 07-Jul-2014 19:47 | 1.0M |
| Farnell-3367-ARALDIT..> | 07-Jul-2014 19:46 | 1.2M |
| Farnell-ICM7228-Inte..> | 07-Jul-2014 19:46 | 1.1M |
| Farnell-Data-Sheet-K..> | 07-Jul-2014 19:46 | 1.2M |
| Farnell-Silica-Gel-M..> | 07-Jul-2014 19:46 | 1.2M |
| Farnell-TKC2-Dusters..> | 07-Jul-2014 19:46 | 1.2M |
| Farnell-CRC-HANDCLEA..> | 07-Jul-2014 19:46 | 1.2M |
| Farnell-760G-French-..> | 07-Jul-2014 19:45 | 1.2M |
| Farnell-Decapant-KF-..> | 07-Jul-2014 19:45 | 1.2M |
| Farnell-1734-ARALDIT..> | 07-Jul-2014 19:45 | 1.2M |
| Farnell-Araldite-Fus..> | 07-Jul-2014 19:45 | 1.2M |
| Farnell-fiche-de-don..> | 07-Jul-2014 19:44 | 1.4M |
| Farnell-safety-data-..> | 07-Jul-2014 19:44 | 1.4M |
| Farnell-A-4-Hardener..> | 07-Jul-2014 19:44 | 1.4M |
| Farnell-CC-Debugger-..> | 07-Jul-2014 19:44 | 1.5M |
| Farnell-MSP430-Hardw..> | 07-Jul-2014 19:43 | 1.8M |
| Farnell-SmartRF06-Ev..> | 07-Jul-2014 19:43 | 1.6M |
| Farnell-CC2531-USB-H..> | 07-Jul-2014 19:43 | 1.8M |
| Farnell-Alimentation..> | 07-Jul-2014 19:43 | 1.8M |
| Farnell-BK889B-PONT-..> | 07-Jul-2014 19:42 | 1.8M |
| Farnell-User-Guide-M..> | 07-Jul-2014 19:41 | 2.0M |
| Farnell-T672-3000-Se..> | 07-Jul-2014 19:41 | 2.0M |
| Farnell-0050375063-D..> | 18-Jul-2014 17:03 | 2.5M |
| Farnell-Mini-Fit-Jr-..> | 18-Jul-2014 17:03 | 2.5M |
| Farnell-43031-0002-M..> | 18-Jul-2014 17:03 | 2.5M |
| Farnell-0433751001-D..> | 18-Jul-2014 17:02 | 2.5M |
| Farnell-Cube-3D-Prin..> | 18-Jul-2014 17:02 | 2.5M |
| Farnell-MTX-Compact-..> | 18-Jul-2014 17:01 | 2.5M |
| Farnell-MTX-3250-MTX..> | 18-Jul-2014 17:01 | 2.5M |
| Farnell-ATtiny26-L-A..> | 18-Jul-2014 17:00 | 2.6M |
| Farnell-MCP3421-Micr..> | 18-Jul-2014 17:00 | 1.2M |
| Farnell-LM19-Texas-I..> | 18-Jul-2014 17:00 | 1.2M |
| Farnell-Data-Sheet-S..> | 18-Jul-2014 17:00 | 1.2M |
| Farnell-LMH6518-Texa..> | 18-Jul-2014 16:59 | 1.3M |
| Farnell-AD7719-Low-V..> | 18-Jul-2014 16:59 | 1.4M |
| Farnell-DAC8143-Data..> | 18-Jul-2014 16:59 | 1.5M |
| Farnell-BGA7124-400-..> | 18-Jul-2014 16:59 | 1.5M |
| Farnell-SICK-OPTIC-E..> | 18-Jul-2014 16:58 | 1.5M |
| Farnell-LT3757-Linea..> | 18-Jul-2014 16:58 | 1.6M |
| Farnell-LT1961-Linea..> | 18-Jul-2014 16:58 | 1.6M |
| Farnell-PIC18F2420-2..> | 18-Jul-2014 16:57 | 2.5M |
| Farnell-DS3231-DS-PD..> | 18-Jul-2014 16:57 | 2.5M |
| Farnell-RDS-80-PDF.htm | 18-Jul-2014 16:57 | 1.3M |
| Farnell-AD8300-Data-..> | 18-Jul-2014 16:56 | 1.3M |
| Farnell-LT6233-Linea..> | 18-Jul-2014 16:56 | 1.3M |
| Farnell-MAX1365-MAX1..> | 18-Jul-2014 16:56 | 1.4M |
| Farnell-XPSAF5130-PD..> | 18-Jul-2014 16:56 | 1.4M |
| Farnell-DP83846A-DsP..> | 18-Jul-2014 16:55 | 1.5M |
| Farnell-Dremel-Exper..> | 18-Jul-2014 16:55 | 1.6M |
| Farnell-MCOC1-Farnel..> | 16-Jul-2014 09:04 | 1.0M |
| Farnell-SL3S1203_121..> | 16-Jul-2014 09:04 | 1.1M |
| Farnell-PN512-Full-N..> | 16-Jul-2014 09:03 | 1.4M |
| Farnell-SL3S4011_402..> | 16-Jul-2014 09:03 | 1.1M |
| Farnell-LPC408x-7x 3..> | 16-Jul-2014 09:03 | 1.6M |
| Farnell-PCF8574-PCF8..> | 16-Jul-2014 09:03 | 1.7M |
| Farnell-LPC81xM-32-b..> | 16-Jul-2014 09:02 | 2.0M |
| Farnell-LPC1769-68-6..> | 16-Jul-2014 09:02 | 1.9M |
| Farnell-Download-dat..> | 16-Jul-2014 09:02 | 2.2M |
| Farnell-LPC3220-30-4..> | 16-Jul-2014 09:02 | 2.2M |
| Farnell-LPC11U3x-32-..> | 16-Jul-2014 09:01 | 2.4M |
| Farnell-SL3ICS1002-1..> | 16-Jul-2014 09:01 | 2.5M |
| Farnell-T672-3000-Se..> | 08-Jul-2014 18:59 | 2.0M |
| Farnell-tesa®pack63..> | 08-Jul-2014 18:56 | 2.0M |
| Farnell-Encodeur-USB..> | 08-Jul-2014 18:56 | 2.0M |
| Farnell-CC2530ZDK-Us..> | 08-Jul-2014 18:55 | 2.1M |
| Farnell-2020-Manuel-..> | 08-Jul-2014 18:55 | 2.1M |
| Farnell-Synchronous-..> | 08-Jul-2014 18:54 | 2.1M |
| Farnell-Arithmetic-L..> | 08-Jul-2014 18:54 | 2.1M |
| Farnell-NA555-NE555-..> | 08-Jul-2014 18:53 | 2.2M |
| Farnell-4-Bit-Magnit..> | 08-Jul-2014 18:53 | 2.2M |
| Farnell-LM555-Timer-..> | 08-Jul-2014 18:53 | 2.2M |
| Farnell-L293d-Texas-..> | 08-Jul-2014 18:53 | 2.2M |
| Farnell-SN54HC244-SN..> | 08-Jul-2014 18:52 | 2.3M |
| Farnell-MAX232-MAX23..> | 08-Jul-2014 18:52 | 2.3M |
| Farnell-High-precisi..> | 08-Jul-2014 18:51 | 2.3M |
| Farnell-SMU-Instrume..> | 08-Jul-2014 18:51 | 2.3M |
| Farnell-900-Series-B..> | 08-Jul-2014 18:50 | 2.3M |
| Farnell-BA-Series-Oh..> | 08-Jul-2014 18:50 | 2.3M |
| Farnell-UTS-Series-S..> | 08-Jul-2014 18:49 | 2.5M |
| Farnell-270-Series-O..> | 08-Jul-2014 18:49 | 2.3M |
| Farnell-UTS-Series-S..> | 08-Jul-2014 18:49 | 2.8M |
| Farnell-Tiva-C-Serie..> | 08-Jul-2014 18:49 | 2.6M |
| Farnell-UTO-Souriau-..> | 08-Jul-2014 18:48 | 2.8M |
| Farnell-Clipper-Seri..> | 08-Jul-2014 18:48 | 2.8M |
| Farnell-SOURIAU-Cont..> | 08-Jul-2014 18:47 | 3.0M |
| Farnell-851-Series-P..> | 08-Jul-2014 18:47 | 3.0M |
| Farnell-SL59830-Inte..> | 06-Jul-2014 10:07 | 1.0M |
| Farnell-ALF1210-PDF.htm | 06-Jul-2014 10:06 | 4.0M |
| Farnell-AD7171-16-Bi..> | 06-Jul-2014 10:06 | 1.0M |
| Farnell-Low-Noise-24..> | 06-Jul-2014 10:05 | 1.0M |
| Farnell-ESCON-Featur..> | 06-Jul-2014 10:05 | 938K |
| Farnell-74LCX573-Fai..> | 06-Jul-2014 10:05 | 1.9M |
| Farnell-1N4148WS-Fai..> | 06-Jul-2014 10:04 | 1.9M |
| Farnell-FAN6756-Fair..> | 06-Jul-2014 10:04 | 850K |
| Farnell-Datasheet-Fa..> | 06-Jul-2014 10:04 | 861K |
| Farnell-ES1F-ES1J-fi..> | 06-Jul-2014 10:04 | 867K |
| Farnell-QRE1113-Fair..> | 06-Jul-2014 10:03 | 879K |
| Farnell-2N7002DW-Fai..> | 06-Jul-2014 10:03 | 886K |
| Farnell-FDC2512-Fair..> | 06-Jul-2014 10:03 | 886K |
| Farnell-FDV301N-Digi..> | 06-Jul-2014 10:03 | 886K |
| Farnell-S1A-Fairchil..> | 06-Jul-2014 10:03 | 896K |
| Farnell-BAV99-Fairch..> | 06-Jul-2014 10:03 | 896K |
| Farnell-74AC00-74ACT..> | 06-Jul-2014 10:03 | 911K |
| Farnell-NaPiOn-Panas..> | 06-Jul-2014 10:02 | 911K |
| Farnell-LQ-RELAYS-AL..> | 06-Jul-2014 10:02 | 924K |
| Farnell-ev-relays-ae..> | 06-Jul-2014 10:02 | 926K |
| Farnell-ESCON-Featur..> | 06-Jul-2014 10:02 | 931K |
| Farnell-Amplifier-In..> | 06-Jul-2014 10:02 | 940K |
| Farnell-Serial-File-..> | 06-Jul-2014 10:02 | 941K |
| Farnell-Both-the-Del..> | 06-Jul-2014 10:01 | 948K |
| Farnell-Videk-PDF.htm | 06-Jul-2014 10:01 | 948K |
| Farnell-EPCOS-173438..> | 04-Jul-2014 10:43 | 3.3M |
| Farnell-Sensorless-C..> | 04-Jul-2014 10:42 | 3.3M |
| Farnell-197.31-KB-Te..> | 04-Jul-2014 10:42 | 3.3M |
| Farnell-PIC12F609-61..> | 04-Jul-2014 10:41 | 3.7M |
| Farnell-PADO-semi-au..> | 04-Jul-2014 10:41 | 3.7M |
| Farnell-03-iec-runds..> | 04-Jul-2014 10:40 | 3.7M |
| Farnell-ACC-Silicone..> | 04-Jul-2014 10:40 | 3.7M |
| Farnell-Series-TDS10..> | 04-Jul-2014 10:39 | 4.0M |
| Farnell-03-iec-runds..> | 04-Jul-2014 10:40 | 3.7M |
| Farnell-0430300011-D..> | 14-Jun-2014 18:13 | 2.0M |
| Farnell-06-6544-8-PD..> | 26-Mar-2014 17:56 | 2.7M |
| Farnell-3M-Polyimide..> | 21-Mar-2014 08:09 | 3.9M |
| Farnell-3M-VolitionT..> | 25-Mar-2014 08:18 | 3.3M |
| Farnell-10BQ060-PDF.htm | 14-Jun-2014 09:50 | 2.4M |
| Farnell-10TPB47M-End..> | 14-Jun-2014 18:16 | 3.4M |
| Farnell-12mm-Size-In..> | 14-Jun-2014 09:50 | 2.4M |
| Farnell-24AA024-24LC..> | 23-Jun-2014 10:26 | 3.1M |
| Farnell-50A-High-Pow..> | 20-Mar-2014 17:31 | 2.9M |
| Farnell-197.31-KB-Te..> | 04-Jul-2014 10:42 | 3.3M |
| Farnell-1907-2006-PD..> | 26-Mar-2014 17:56 | 2.7M |
| Farnell-5910-PDF.htm | 25-Mar-2014 08:15 | 3.0M |
| Farnell-6517b-Electr..> | 29-Mar-2014 11:12 | 3.3M |
| Farnell-A-True-Syste..> | 29-Mar-2014 11:13 | 3.3M |
| Farnell-ACC-Silicone..> | 04-Jul-2014 10:40 | 3.7M |
| Farnell-AD524-PDF.htm | 20-Mar-2014 17:33 | 2.8M |
| Farnell-ADL6507-PDF.htm | 14-Jun-2014 18:19 | 3.4M |
| Farnell-ADSP-21362-A..> | 20-Mar-2014 17:34 | 2.8M |
| Farnell-ALF1210-PDF.htm | 04-Jul-2014 10:39 | 4.0M |
| Farnell-ALF1225-12-V..> | 01-Apr-2014 07:40 | 3.4M |
| Farnell-ALF2412-24-V..> | 01-Apr-2014 07:39 | 3.4M |
| Farnell-AN10361-Phil..> | 23-Jun-2014 10:29 | 2.1M |
| Farnell-ARADUR-HY-13..> | 26-Mar-2014 17:55 | 2.8M |
| Farnell-ARALDITE-201..> | 21-Mar-2014 08:12 | 3.7M |
| Farnell-ARALDITE-CW-..> | 26-Mar-2014 17:56 | 2.7M |
| Farnell-ATMEL-8-bit-..> | 19-Mar-2014 18:04 | 2.1M |
| Farnell-ATMEL-8-bit-..> | 11-Mar-2014 07:55 | 2.1M |
| Farnell-ATmega640-VA..> | 14-Jun-2014 09:49 | 2.5M |
| Farnell-ATtiny20-PDF..> | 25-Mar-2014 08:19 | 3.6M |
| Farnell-ATtiny26-L-A..> | 13-Jun-2014 18:40 | 1.8M |
| Farnell-Alimentation..> | 14-Jun-2014 18:24 | 2.5M |
| Farnell-Alimentation..> | 01-Apr-2014 07:42 | 3.4M |
| Farnell-Amplificateu..> | 29-Mar-2014 11:11 | 3.3M |
| Farnell-An-Improved-..> | 14-Jun-2014 09:49 | 2.5M |
| Farnell-Atmel-ATmega..> | 19-Mar-2014 18:03 | 2.2M |
| Farnell-Avvertenze-e..> | 14-Jun-2014 18:20 | 3.3M |
| Farnell-BC846DS-NXP-..> | 13-Jun-2014 18:42 | 1.6M |
| Farnell-BC847DS-NXP-..> | 23-Jun-2014 10:24 | 3.3M |
| Farnell-BF545A-BF545..> | 23-Jun-2014 10:28 | 2.1M |
| Farnell-BK2650A-BK26..> | 29-Mar-2014 11:10 | 3.3M |
| Farnell-BT151-650R-N..> | 13-Jun-2014 18:40 | 1.7M |
| Farnell-BTA204-800C-..> | 13-Jun-2014 18:42 | 1.6M |
| Farnell-BUJD203AX-NX..> | 13-Jun-2014 18:41 | 1.7M |
| Farnell-BYV29F-600-N..> | 13-Jun-2014 18:42 | 1.6M |
| Farnell-BYV79E-serie..> | 10-Mar-2014 16:19 | 1.6M |
| Farnell-BZX384-serie..> | 23-Jun-2014 10:29 | 2.1M |
| Farnell-Battery-GBA-..> | 14-Jun-2014 18:13 | 2.0M |
| Farnell-C.A-6150-C.A..> | 14-Jun-2014 18:24 | 2.5M |
| Farnell-C.A 8332B-C...> | 01-Apr-2014 07:40 | 3.4M |
| Farnell-CC2560-Bluet..> | 29-Mar-2014 11:14 | 2.8M |
| Farnell-CD4536B-Type..> | 14-Jun-2014 18:13 | 2.0M |
| Farnell-CIRRUS-LOGIC..> | 10-Mar-2014 17:20 | 2.1M |
| Farnell-CS5532-34-BS..> | 01-Apr-2014 07:39 | 3.5M |
| Farnell-Cannon-ZD-PD..> | 11-Mar-2014 08:13 | 2.8M |
| Farnell-Ceramic-tran..> | 14-Jun-2014 18:19 | 3.4M |
| Farnell-Circuit-Note..> | 26-Mar-2014 18:00 | 2.8M |
| Farnell-Circuit-Note..> | 26-Mar-2014 18:00 | 2.8M |
| Farnell-Cles-electro..> | 21-Mar-2014 08:13 | 3.9M |
| Farnell-Conception-d..> | 11-Mar-2014 07:49 | 2.4M |
| Farnell-Connectors-N..> | 14-Jun-2014 18:12 | 2.1M |
| Farnell-Construction..> | 14-Jun-2014 18:25 | 2.5M |
| Farnell-Controle-de-..> | 11-Mar-2014 08:16 | 2.8M |
| Farnell-Cordless-dri..> | 14-Jun-2014 18:13 | 2.0M |
| Farnell-Current-Tran..> | 26-Mar-2014 17:58 | 2.7M |
| Farnell-Current-Tran..> | 26-Mar-2014 17:58 | 2.7M |
| Farnell-Current-Tran..> | 26-Mar-2014 17:59 | 2.7M |
| Farnell-Current-Tran..> | 26-Mar-2014 17:59 | 2.7M |
| Farnell-DC-Fan-type-..> | 14-Jun-2014 09:48 | 2.5M |
| Farnell-DC-Fan-type-..> | 14-Jun-2014 09:51 | 1.8M |
| Farnell-Davum-TMC-PD..> | 14-Jun-2014 18:27 | 2.4M |
| Farnell-De-la-puissa..> | 29-Mar-2014 11:10 | 3.3M |
| Farnell-Directive-re..> | 25-Mar-2014 08:16 | 3.0M |
| Farnell-Documentatio..> | 14-Jun-2014 18:26 | 2.5M |
| Farnell-Download-dat..> | 13-Jun-2014 18:40 | 1.8M |
| Farnell-ECO-Series-T..> | 20-Mar-2014 08:14 | 2.5M |
| Farnell-ELMA-PDF.htm | 29-Mar-2014 11:13 | 3.3M |
| Farnell-EMC1182-PDF.htm | 25-Mar-2014 08:17 | 3.0M |
| Farnell-EPCOS-173438..> | 04-Jul-2014 10:43 | 3.3M |
| Farnell-EPCOS-Sample..> | 11-Mar-2014 07:53 | 2.2M |
| Farnell-ES2333-PDF.htm | 11-Mar-2014 08:14 | 2.8M |
| Farnell-Ed.081002-DA..> | 19-Mar-2014 18:02 | 2.5M |
| Farnell-F28069-Picco..> | 14-Jun-2014 18:14 | 2.0M |
| Farnell-F42202-PDF.htm | 19-Mar-2014 18:00 | 2.5M |
| Farnell-FDS-ITW-Spra..> | 14-Jun-2014 18:22 | 3.3M |
| Farnell-FICHE-DE-DON..> | 10-Mar-2014 16:17 | 1.6M |
| Farnell-Fastrack-Sup..> | 23-Jun-2014 10:25 | 3.3M |
| Farnell-Ferric-Chlor..> | 29-Mar-2014 11:14 | 2.8M |
| Farnell-Fiche-de-don..> | 14-Jun-2014 09:47 | 2.5M |
| Farnell-Fiche-de-don..> | 14-Jun-2014 18:26 | 2.5M |
| Farnell-Fluke-1730-E..> | 14-Jun-2014 18:23 | 2.5M |
| Farnell-GALVA-A-FROI..> | 26-Mar-2014 17:56 | 2.7M |
| Farnell-GALVA-MAT-Re..> | 26-Mar-2014 17:57 | 2.7M |
| Farnell-GN-RELAYS-AG..> | 20-Mar-2014 08:11 | 2.6M |
| Farnell-HC49-4H-Crys..> | 14-Jun-2014 18:20 | 3.3M |
| Farnell-HFE1600-Data..> | 14-Jun-2014 18:22 | 3.3M |
| Farnell-HI-70300-Sol..> | 14-Jun-2014 18:27 | 2.4M |
| Farnell-HUNTSMAN-Adv..> | 10-Mar-2014 16:17 | 1.7M |
| Farnell-Haute-vitess..> | 11-Mar-2014 08:17 | 2.4M |
| Farnell-IP4252CZ16-8..> | 13-Jun-2014 18:41 | 1.7M |
| Farnell-Instructions..> | 19-Mar-2014 18:01 | 2.5M |
| Farnell-KSZ8851SNL-S..> | 23-Jun-2014 10:28 | 2.1M |

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-L-efficacite..> 11-Mar-2014 07:52 2.3M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-LCW-CQ7P.CC-..> 25-Mar-2014 08:19 3.2M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-LME49725-Pow..> 14-Jun-2014 09:49 2.5M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-LOCTITE-542-..> 25-Mar-2014 08:15 3.0M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-LOCTITE-3463..> 25-Mar-2014 08:19 3.0M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-LUXEON-Guide..> 11-Mar-2014 07:52 2.3M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Leaded-Trans..> 23-Jun-2014 10:26 3.2M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Les-derniers..> 11-Mar-2014 07:50 2.3M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Loctite3455-..> 25-Mar-2014 08:16 3.0M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Low-cost-Enc..> 13-Jun-2014 18:42 1.7M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Lubrifiant-a..> 26-Mar-2014 18:00 2.7M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-MC3510-PDF.htm 25-Mar-2014 08:17 3.0M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-MC21605-PDF.htm 11-Mar-2014 08:14 2.8M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-MCF532x-7x-E..> 29-Mar-2014 11:14 2.8M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-MICREL-KSZ88..> 11-Mar-2014 07:54 2.2M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-MICROCHIP-PI..> 19-Mar-2014 18:02 2.5M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-MOLEX-39-00-..> 10-Mar-2014 17:19 1.9M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-MOLEX-43020-..> 10-Mar-2014 17:21 1.9M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-MOLEX-43160-..> 10-Mar-2014 17:21 1.9M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-MOLEX-87439-..> 10-Mar-2014 17:21 1.9M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-MPXV7002-Rev..> 20-Mar-2014 17:33 2.8M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-MX670-MX675-..> 14-Jun-2014 09:46 2.5M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Microchip-MC..> 13-Jun-2014 18:27 1.8M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Microship-PI..> 11-Mar-2014 07:53 2.2M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Midas-Active..> 14-Jun-2014 18:17 3.4M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Midas-MCCOG4..> 14-Jun-2014 18:11 2.1M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Miniature-Ci..> 26-Mar-2014 17:55 2.8M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Mistral-PDF.htm 14-Jun-2014 18:12 2.1M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Molex-83421-..> 14-Jun-2014 18:17 3.4M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Molex-COMMER..> 14-Jun-2014 18:16 3.4M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Molex-Crimp-..> 10-Mar-2014 16:27 1.7M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Multi-Functi..> 20-Mar-2014 17:38 3.0M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-NTE_SEMICOND..> 11-Mar-2014 07:52 2.3M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-NXP-74VHC126..> 10-Mar-2014 16:17 1.6M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-NXP-BT136-60..> 11-Mar-2014 07:52 2.3M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-NXP-PBSS9110..> 10-Mar-2014 17:21 1.9M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-NXP-PCA9555 ..> 11-Mar-2014 07:54 2.2M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-NXP-PMBFJ620..> 10-Mar-2014 16:16 1.7M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-NXP-PSMN1R7-..> 10-Mar-2014 16:17 1.6M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-NXP-PSMN7R0-..> 10-Mar-2014 17:19 2.1M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-NXP-TEA1703T..> 11-Mar-2014 08:15 2.8M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Nilï¬-sk-E-..> 14-Jun-2014 09:47 2.5M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Novembre-201..> 20-Mar-2014 17:38 3.3M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-OMRON-Master..> 10-Mar-2014 16:26 1.8M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-OSLON-SSL-Ce..> 19-Mar-2014 18:03 2.1M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-OXPCIE958-FB..> 13-Jun-2014 18:40 1.8M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-PADO-semi-au..> 04-Jul-2014 10:41 3.7M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-PBSS5160T-60..> 19-Mar-2014 18:03 2.1M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-PDTA143X-ser..> 20-Mar-2014 08:12 2.6M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-PDTB123TT-NX..> 13-Jun-2014 18:43 1.5M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-PESD5V0F1BL-..> 13-Jun-2014 18:43 1.5M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-PESD9X5.0L-P..> 13-Jun-2014 18:43 1.6M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-PIC12F609-61..> 04-Jul-2014 10:41 3.7M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-PIC18F2455-2..> 23-Jun-2014 10:27 3.1M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-PIC24FJ256GB..> 14-Jun-2014 09:51 2.4M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-PMBT3906-PNP..> 13-Jun-2014 18:44 1.5M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-PMBT4403-PNP..> 23-Jun-2014 10:27 3.1M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-PMEG4002EL-N..> 14-Jun-2014 18:18 3.4M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-PMEG4010CEH-..> 13-Jun-2014 18:43 1.6M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Panasonic-15..> 23-Jun-2014 10:29 2.1M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Panasonic-EC..> 20-Mar-2014 17:36 2.6M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Panasonic-EZ..> 20-Mar-2014 08:10 2.6M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Panasonic-Id..> 20-Mar-2014 17:35 2.6M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Panasonic-Ne..> 20-Mar-2014 17:36 2.6M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Panasonic-Ra..> 20-Mar-2014 17:37 2.6M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Panasonic-TS..> 20-Mar-2014 08:12 2.6M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Panasonic-Y3..> 20-Mar-2014 08:11 2.6M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Pico-Spox-Wi..> 10-Mar-2014 16:16 1.7M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Pompes-Charg..> 24-Apr-2014 20:23 3.3M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Ponts-RLC-po..> 14-Jun-2014 18:23 3.3M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Portable-Ana..> 29-Mar-2014 11:16 2.8M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Premier-Farn..> 21-Mar-2014 08:11 3.8M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Produit-3430..> 14-Jun-2014 09:48 2.5M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Proskit-SS-3..> 10-Mar-2014 16:26 1.8M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Puissance-ut..> 11-Mar-2014 07:49 2.4M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Q48-PDF.htm 23-Jun-2014 10:29 2.1M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Radial-Lead-..> 20-Mar-2014 08:12 2.6M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Realiser-un-..> 11-Mar-2014 07:51 2.3M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Reglement-RE..> 21-Mar-2014 08:08 3.9M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Repartiteurs..> 14-Jun-2014 18:26 2.5M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-S-TRI-SWT860..> 21-Mar-2014 08:11 3.8M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-SB175-Connec..> 11-Mar-2014 08:14 2.8M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-SMBJ-Transil..> 29-Mar-2014 11:12 3.3M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-SOT-23-Multi..> 11-Mar-2014 07:51 2.3M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-SPLC780A1-16..> 14-Jun-2014 18:25 2.5M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-SSC7102-Micr..> 23-Jun-2014 10:25 3.2M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-SVPE-series-..> 14-Jun-2014 18:15 2.0M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Sensorless-C..> 04-Jul-2014 10:42 3.3M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Septembre-20..> 20-Mar-2014 17:46 3.7M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Serie-PicoSc..> 19-Mar-2014 18:01 2.5M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Serie-Standa..> 14-Jun-2014 18:23 3.3M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Series-2600B..> 20-Mar-2014 17:30 3.0M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Series-TDS10..> 04-Jul-2014 10:39 4.0M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Signal-PCB-R..> 14-Jun-2014 18:11 2.1M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Strangkuhlko..> 21-Mar-2014 08:09 3.9M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Supercapacit..> 26-Mar-2014 17:57 2.7M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-TDK-Lambda-H..> 14-Jun-2014 18:21 3.3M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-TEKTRONIX-DP..> 10-Mar-2014 17:20 2.0M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Tektronix-AC..> 13-Jun-2014 18:44 1.5M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Telemetres-l..> 20-Mar-2014 17:46 3.7M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-Termometros-..> 14-Jun-2014 18:14 2.0M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-The-essentia..> 10-Mar-2014 16:27 1.7M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-U2270B-PDF.htm 14-Jun-2014 18:15 3.4M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-USB-Buccanee..> 14-Jun-2014 09:48 2.5M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-USB1T11A-PDF..> 19-Mar-2014 18:03 2.1M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-V4N-PDF.htm 14-Jun-2014 18:11 2.1M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-WetTantalum-..> 11-Mar-2014 08:14 2.8M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-XPS-AC-Octop..> 14-Jun-2014 18:11 2.1M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-XPS-MC16-XPS..> 11-Mar-2014 08:15 2.8M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-YAGEO-DATA-S..> 11-Mar-2014 08:13 2.8M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-ZigBee-ou-le..> 11-Mar-2014 07:50 2.4M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-celpac-SUL84..> 21-Mar-2014 08:11 3.8M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-china_rohs_o..> 21-Mar-2014 10:04 3.9M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-cree-Xlamp-X..> 20-Mar-2014 17:34 2.8M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-cree-Xlamp-X..> 20-Mar-2014 17:35 2.7M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-cree-Xlamp-X..> 20-Mar-2014 17:31 2.9M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-cree-Xlamp-m..> 20-Mar-2014 17:32 2.9M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-cree-Xlamp-m..> 20-Mar-2014 17:32 2.9M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-ir1150s_fr.p..> 29-Mar-2014 11:11 3.3M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-manual-bus-p..> 10-Mar-2014 16:29 1.9M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-propose-plus..> 11-Mar-2014 08:19 2.8M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-techfirst_se..> 21-Mar-2014 08:08 3.9M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-testo-205-20..> 20-Mar-2014 17:37 3.0M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-testo-470-Fo..> 20-Mar-2014 17:38 3.0M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Farnell-uC-OS-III-Br..> 10-Mar-2014 17:20 2.0M

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Sefram-7866HD.pdf-PD..> 29-Mar-2014 11:46 472K

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Sefram-CAT_ENREGISTR..> 29-Mar-2014 11:46 461K

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Sefram-CAT_MESUREURS..> 29-Mar-2014 11:46 435K

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Sefram-GUIDE_SIMPLIF..> 29-Mar-2014 11:46 481K

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Sefram-GUIDE_SIMPLIF..> 29-Mar-2014 11:46 442K

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Sefram-GUIDE_SIMPLIF..> 29-Mar-2014 11:46 422K

![[TXT]](http://www.audentia-gestion.fr/icons/text.gif)

Sefram-SP270.pdf-PDF..> 29-Mar-2014 11:46 464K

CHAPTER 15

Moving Average Filters

The moving average is the most common filter in DSP, mainly because it is the easiest digital filter to understand and use. In spite of its simplicity, the moving average filter is optimal for a common task: reducing random noise while retaining a sharp step response. This makes it the premier filter for time domain encoded signals. However, the moving average is the worst filter for frequency domain encoded signals, with little ability to separate one band of frequencies from another. Relatives of the moving average filter include the Gaussian, Blackman, and multiple-pass moving average. These have slightly better performance in the frequency domain, at the expense of increased computation time.

Implementation by Convolution

As the name implies, the moving average filter operates by averaging a number of points from the input signal to produce each point in the output signal. In equation form, this is written:

EQUATION 15-1
Equation of the moving average filter. In this equation, x[ ] is the input signal, y[ ] is the output signal, and M is the number of points used in the moving average. This equation only uses points on one side of the output sample being calculated.

$$y[i] = \frac{1}{M} \sum_{j=0}^{M-1} x[i+j]$$

Where x[ ] is the input signal, y[ ] is the output signal, and M is the number of points in the average. For example, in a 5 point moving average filter, point 80 in the output signal is given by:

$$y[80] = \frac{x[80] + x[81] + x[82] + x[83] + x[84]}{5}$$

278 The Scientist and Engineer's Guide to Digital Signal Processing

As an alternative, the group of points from the input signal can be chosen symmetrically around the output point:

$$y[80] = \frac{x[78] + x[79] + x[80] + x[81] + x[82]}{5}$$

This corresponds to changing the summation in Eq. 15-1 from $j = 0$ to $M-1$, to $j = -(M-1)/2$ to $(M-1)/2$. For instance, in an 11 point moving average filter, the index, j, can run from 0 to 10 (one side averaging) or -5 to 5 (symmetrical averaging). Symmetrical averaging requires that M be an odd number. Programming is slightly easier with the points on only one side; however, this produces a relative shift between the input and output signals.

You should recognize that the moving average filter is a convolution using a very simple filter kernel. For example, a 5 point filter has the filter kernel: ..., 0, 0, 1/5, 1/5, 1/5, 1/5, 1/5, 0, 0, .... That is, the moving average filter is a convolution of the input signal with a rectangular pulse having an area of one. Table 15-1 shows a program to implement the moving average filter.

TABLE 15-1

    100 'MOVING AVERAGE FILTER
    110 'This program filters 5000 samples with a 101 point moving
    120 'average filter, resulting in 4900 samples of filtered data.
    130 '
    140 DIM X[4999] 'X[ ] holds the input signal
    150 DIM Y[4999] 'Y[ ] holds the output signal
    160 '
    170 GOSUB XXXX 'Mythical subroutine to load X[ ]
    180 '
    190 FOR I% = 50 TO 4949 'Loop for each point in the output signal
    200 Y[I%] = 0 'Zero, so it can be used as an accumulator
    210 FOR J% = -50 TO 50 'Calculate the summation over X[I%-50] to X[I%+50]
    220 Y[I%] = Y[I%] + X[I%+J%]
    230 NEXT J%
    240 Y[I%] = Y[I%]/101 'Complete the average by dividing
    250 NEXT I%
    260 '
    270 END
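For readers working outside BASIC, the convolution view of Eq. 15-1 can be sketched in Python with NumPy (an assumption; the function names `moving_average_one_sided` and `moving_average_symmetric` are hypothetical). The kernel `np.ones(m) / m` is the unit-area rectangular pulse described above, and `np.convolve(..., mode="valid")` keeps only the output points whose full window lies inside the input, much as Table 15-1 skips the first and last 50 samples.

```python
import numpy as np

def moving_average_one_sided(x, m):
    """Eq. 15-1: y[i] = (1/M) * sum_{j=0}^{M-1} x[i+j].

    Only positions where the whole window fits inside x are returned,
    so the result has len(x) - m + 1 points (requires len(x) >= m).
    """
    x = np.asarray(x, dtype=float)
    kernel = np.ones(m) / m  # rectangular pulse with an area of one
    # 'valid' keeps fully overlapped positions: result[k] = mean(x[k:k+m])
    return np.convolve(x, kernel, mode="valid")

def moving_average_symmetric(x, m):
    """Symmetric form: y[i] = (1/M) * sum_{j=-(M-1)/2}^{(M-1)/2} x[i+j].

    Returns an array as long as x; the (M-1)/2 samples at each end,
    where the window would run off the signal, are set to NaN.
    """
    if m % 2 == 0:
        raise ValueError("symmetric averaging requires an odd M")
    x = np.asarray(x, dtype=float)
    half = (m - 1) // 2
    y = np.full(x.shape, np.nan)
    # The symmetric output at i equals the one-sided output at i - half.
    y[half:len(x) - half] = moving_average_one_sided(x, m)
    return y
```

With `x = np.arange(10.0)` and `m = 5`, the one-sided version returns `[2, 3, 4, 5, 6, 7]` for output indices 0 to 5, while the symmetric version places the same values at indices 2 to 7, so it follows the ramp without a shift. The one-sided result is displaced by (M-1)/2 = 2 samples, which is the relative shift mentioned above.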

Noise Reduction vs. Step Response

Many scientists and engineers feel guilty about using the moving average filter. Because it is so very simple, the moving average filter is often the first thing tried when faced with a problem. Even if the problem is completely solved, there is still the feeling that something more should be done. This situation is truly ironic. Not only is the moving average filter very good for many applications, it is optimal for a common problem, reducing random white noise while keeping the sharpest step response.

Chapter 15- Moving Average Filters 279

FIGURE 15-1
Example of a moving average filter. In (a), a rectangular pulse is buried in random noise. In (b) and (c), this signal is filtered with 11 and 51 point moving average filters, respectively. As the number of points in the filter increases, the noise becomes lower; however, the edges become less sharp. The moving average filter is the optimal solution for this problem, providing the lowest noise possible for a given edge sharpness.
[Panels: (a) Original signal; (b) 11 point moving average; (c) 51 point moving average. Each plots Amplitude against Sample number, 0 to 500.]

Figure 15-1 shows an example of how this works. The signal in (a) is a pulse buried in random noise. In (b) and (c), the smoothing action of the moving average filter decreases the amplitude of the random noise (good), but also reduces the sharpness of the edges (bad). Of all the possible linear filters that could be used, the moving average produces the lowest noise for a given edge sharpness. For random noise that is uncorrelated from sample to sample, the noise (its standard deviation) is reduced by a factor equal to the square root of the number of points in the average. For example, a 100 point moving average filter reduces the noise by a factor of 10.
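The square-root rule can be checked numerically. This sketch assumes the noise is white Gaussian noise (independent from sample to sample), which is the case the rule describes; `noise_reduction_factor` is a hypothetical helper, and the sample count and seed are arbitrary choices.

```python
import numpy as np

def noise_reduction_factor(m, n=200_000, seed=0):
    """Empirical ratio of input to output noise standard deviation for an
    M point moving average applied to unit-variance white Gaussian noise."""
    rng = np.random.default_rng(seed)
    noise = rng.standard_normal(n)
    smoothed = np.convolve(noise, np.ones(m) / m, mode="valid")
    return noise.std() / smoothed.std()

for m in (4, 25, 100):
    # Compare the measured ratio with the predicted sqrt(M).
    print(m, round(noise_reduction_factor(m), 2), np.sqrt(m))
```

For M = 4, 25 and 100 the measured ratios should land close to 2, 5 and 10. For noise that is already low-pass filtered (positively correlated between neighboring samples), the improvement is smaller than the square root of M.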

To understand why the moving average is the best solution, imagine we want to design a filter with a fixed edge sharpness. For example, let's assume we fix the edge sharpness by specifying that there are eleven points in the rise of the step response. This requires that the filter kernel have eleven points. The optimization question is: how do we choose the eleven values in the filter kernel to minimize the noise on the output signal? Since the noise we are trying to reduce is random, none of the input points is special; each is just as noisy as its neighbor. Therefore, it is useless to give preferential treatment to any one of the input points by assigning it a larger coefficient in the filter kernel. The lowest noise is obtained when all the input samples are treated equally, i.e., the moving average filter. (Later in this chapter we show that other filters are essentially as good. The point is, no filter is better than the simple moving average.)
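The equal-weights argument can be made precise with a short derivation. It assumes, as the text does, that the noise samples are uncorrelated with equal variance $\sigma^2$, and additionally that the kernel $h_0, \dots, h_{M-1}$ is normalized so that $\sum_k h_k = 1$ (unity gain at DC, so the step keeps its height). The output noise variance is then

$$\sigma_{\text{out}}^2 = \sigma^2 \sum_{k=0}^{M-1} h_k^2 .$$

By the Cauchy-Schwarz inequality,

$$1 = \Big(\sum_{k=0}^{M-1} h_k\Big)^2 \le M \sum_{k=0}^{M-1} h_k^2 \quad\Longrightarrow\quad \sigma_{\text{out}}^2 \ge \frac{\sigma^2}{M},$$

with equality only when every $h_k = 1/M$, which is the moving average kernel. Taking the square root gives $\sigma_{\text{out}} = \sigma/\sqrt{M}$, the square-root noise reduction quoted earlier; for the eleven point kernel in this example that is a factor of about 3.3.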


Frequency Response

Figure 15-2 shows the frequency response of the moving average filter. It is mathematically described by the Fourier transform of the rectangular pulse, as discussed in Chapter 11:

EQUATION 15-2
Frequency response of an M point moving average filter. The frequency, f, runs between 0 and 0.5. For f = 0, use: $H[f] = 1$

$$H[f] = \frac{\sin(\pi f M)}{M \sin(\pi f)}$$

FIGURE 15-2
Frequency response of the moving average filter. The moving average is a very poor low-pass filter, due to its slow roll-off and poor stopband attenuation. These curves are generated by Eq. 15-2.
[Plot: Amplitude (0 to 1.2) against Frequency (0 to 0.5) for 3 point, 11 point, and 31 point moving averages.]

The roll-off is very slow and the stopband attenuation is ghastly. Clearly, the moving average filter cannot separate one band of frequencies from another. Remember, good performance in the time domain results in poor performance in the frequency domain, and vice versa. In short, the moving average is an exceptionally good smoothing filter (the action in the time domain), but an exceptionally bad low-pass filter (the action in the frequency domain).
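Eq. 15-2 can be cross-checked against the DFT of the rectangular kernel. A minimal sketch in Python with NumPy, assuming frequency is expressed as a fraction of the sampling rate as in the text; `ma_frequency_response` is a hypothetical name. Eq. 15-2 goes negative between lobes, so it is compared with the DFT magnitude through its absolute value.

```python
import numpy as np

def ma_frequency_response(f, m):
    """Eq. 15-2: H[f] = sin(pi*f*M) / (M*sin(pi*f)), with H[0] = 1.

    f is a frequency (or array of frequencies) as a fraction of the
    sampling rate, between 0 and 0.5. Always returns a 1-D array."""
    f = np.atleast_1d(np.asarray(f, dtype=float))
    h = np.ones_like(f)  # covers the f = 0 special case
    nz = f != 0
    h[nz] = np.sin(np.pi * f[nz] * m) / (m * np.sin(np.pi * f[nz]))
    return h

# Cross-check: DFT of an 11 point rectangular kernel, zero-padded to 1024 points.
m, n = 11, 1024
kernel = np.ones(m) / m
dft_mag = np.abs(np.fft.rfft(kernel, n))  # bins k = 0..n/2, i.e. f = k/n
f = np.arange(n // 2 + 1) / n
print(np.max(np.abs(dft_mag - np.abs(ma_frequency_response(f, m)))))
```

The printed maximum difference should be at the level of floating-point round-off, confirming that Eq. 15-2 is the magnitude of the rectangular pulse's spectrum.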

Relatives of the Moving Average Filter

In a perfect world, filter designers would only have to deal with time domain or frequency domain encoded information, but never a mixture of the two in the same signal. Unfortunately, there are some applications where both domains are simultaneously important. For instance, television signals fall into this nasty category. Video information is encoded in the time domain, that is, the shape of the waveform corresponds to the patterns of brightness in the image. However, during transmission the video signal is treated according to its frequency composition, such as its total bandwidth, how the carrier waves for sound & color are added, elimination & restoration of the DC component, etc. As another example, electromagnetic interference is best understood in the frequency domain, even if the signal's information is encoded in the time domain. For instance, the temperature monitor in a scientific experiment might be contaminated with 60 hertz from the power lines, 30 kHz from a switching power supply, or 1320 kHz from a local AM radio station. Relatives of the moving average filter have better frequency domain performance, and can be useful in these mixed domain applications.

FIGURE 15-3
Characteristics of multiple-pass moving average filters. Figure (a) shows the filter kernels resulting from passing a seven point moving average filter over the data once, twice and four times. Figure (b) shows the corresponding step responses, while (c) and (d) show the corresponding frequency responses.
[Panels: (a) Filter kernel; (b) Step response, obtained by integrating (a); (c) Frequency response, obtained by FFT of (a); (d) Frequency response in dB, 20 log of (c). Each panel shows 1, 2 and 4 passes; (a) and (b) are plotted against Sample number (0 to 24), (c) and (d) against Frequency (0 to 0.5).]

Multiple-pass moving average filters involve passing the input signal through a moving average filter two or more times. Figure 15-3a shows the overall filter kernel resulting from one, two and four passes. Two passes are equivalent to using a triangular filter kernel (a rectangular filter kernel convolved with itself). After four or more passes, the equivalent filter kernel looks like a Gaussian (recall the Central Limit Theorem). As shown in (b), multiple passes produce an "s" shaped step response, as compared to the straight line of the single pass. The frequency responses in (c) and (d) are given by Eq. 15-2 multiplied by itself for each pass. That is, each time domain convolution results in a multiplication of the frequency spectra.
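The multiple-pass kernels and the multiply-the-spectra rule can be sketched the same way (Python with NumPy assumed; `multipass_kernel` is a hypothetical helper). Cascading passes is modeled by convolving the rectangular kernel with itself, which is equivalent to filtering the signal repeatedly when edge effects are ignored.

```python
import numpy as np

def multipass_kernel(m, passes):
    """Equivalent kernel of `passes` cascaded M point moving averages:
    the rectangular kernel convolved with itself passes - 1 times."""
    rect = np.ones(m) / m
    kernel = np.array([1.0])  # identity kernel (a single unit impulse)
    for _ in range(passes):
        kernel = np.convolve(kernel, rect)
    return kernel

k2 = multipass_kernel(7, 2)  # triangular, 13 points
k4 = multipass_kernel(7, 4)  # bell shaped, 25 points (0 to 24, as in Fig. 15-3a)
print(np.round(k2 * 49).astype(int))  # -> [1 2 3 4 5 6 7 6 5 4 3 2 1]

# Each pass multiplies the spectrum by the single-pass response.
n = 1024
H1 = np.fft.rfft(np.ones(7) / 7, n)
H4 = np.fft.rfft(k4, n)
print(np.allclose(H4, H1 ** 4))  # -> True
```

The step responses of Fig. 15-3b correspond to the running sum of each kernel, for example `np.cumsum(k4)`, which rises from 0 to 1 along an "s" shaped curve.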


Figure 15-4 shows the frequency response of two other relatives of the moving average filter. When a pure Gaussian is used as a filter kernel, the frequency response is also a Gaussian, as discussed in Chapter 11. The Gaussian is important because it is the impulse response of many natural and man-made systems. For example, a brief pulse of light entering a long fiber optic transmission line will exit as a Gaussian pulse, due to the different paths taken by the photons within the fiber. The Gaussian filter kernel is also used extensively in image processing because it has unique properties that allow fast two-dimensional convolutions (see Chapter 24). The second frequency response in Fig. 15-4 corresponds to using a Blackman window as a filter kernel. (The term window has no meaning here; it is simply part of the accepted name of this curve.) The exact shape of the Blackman window is given in Chapter 16 (Eq. 16-2, Fig. 16-2); however, it looks much like a Gaussian.

How are these relatives of the moving average filter better than the moving average filter itself? Three ways: First, and most important, these filters have better stopband attenuation than the moving average filter. Second, the filter kernels taper to a smaller amplitude near the ends. Recall that each point in the output signal is a weighted sum of a group of samples from the input. If the filter kernel tapers, samples in the input signal that are farther away are given less weight than those close by. Third, the step responses are smooth curves, rather than the abrupt straight line of the moving average. These last two are usually of limited benefit, although you might find applications where they are genuine advantages.

The moving average filter and its relatives are all about the same at reducing random noise while maintaining a sharp step response. The ambiguity lies in how the risetime of the step response is measured. If the risetime is measured from 0% to 100% of the step, the moving average filter is the best you can do, as previously shown. In comparison, measuring the risetime from 10% to 90% makes the Blackman window better than the moving average filter. The point is, this is just theoretical squabbling; consider these filters equal in this parameter.

The biggest difference in these filters is execution speed. Using a recursive algorithm (described next), the moving average filter will run like lightning in your computer. In fact, it is the fastest digital filter available. Multiple passes of the moving average will be correspondingly slower, but still very quick. In comparison, the Gaussian and Blackman filters are excruciatingly slow, because they must use convolution. As a rough guide, expect them to be slower by a factor of about ten times the number of points in the filter kernel (based on multiplication being about 10 times slower than addition). For example, expect a 100 point Gaussian to be 1000 times slower than a moving average using recursion.

FIGURE 15-4
Frequency response of the Blackman window and Gaussian filter kernels. Both these filters provide better stopband attenuation than the moving average filter. This has no advantage in removing random noise from time domain encoded signals, but it can be useful in mixed domain problems. The disadvantage of these filters is that they must use convolution, a terribly slow algorithm.
[Plot: Amplitude (dB), -140 to 20, against Frequency (0 to 0.5) for the Gaussian and Blackman kernels.]

Recursive Implementation

A tremendous advantage of the moving average filter is that it can be implemented with an algorithm that is very fast. To understand this algorithm, imagine passing an input signal, x[ ], through a seven point moving average filter to form an output signal, y[ ]. Now look at how two adjacent output points, y[50] and y[51], are calculated (for simplicity, the division by 7 is left out; it can be applied to each result at the end):

$$y[50] = x[47] + x[48] + x[49] + x[50] + x[51] + x[52] + x[53]$$

$$y[51] = x[48] + x[49] + x[50] + x[51] + x[52] + x[53] + x[54]$$

These are nearly the same calculation; points x[48] through x[53] must be added for y[50], and again for y[51]. If y[50] has already been calculated, the most efficient way to calculate y[51] is:

$$y[51] = y[50] + x[54] - x[47]$$

Once y[51] has been found using y[50], then y[52] can be calculated from sample y[51], and so on. After the first point is calculated in y[ ], all of the other points can be found with only a single addition and subtraction per point. This can be expressed in the equation:

EQUATION 15-3
Recursive implementation of the moving average filter. In this equation, x[ ] is the input signal, y[ ] is the output signal, and M is the number of points in the moving average (an odd number). Before this equation can be used, the first point in the signal must be calculated using a standard summation.

$$y[i] = y[i-1] + x[i+p] - x[i-q]$$

where: $p = (M-1)/2$ and $q = p + 1$

Notice that this equation uses two sources of data to calculate each point in the output: points from the input and previously calculated points from the output. This is called a recursive equation, meaning that the result of one calculation is used in future calculations.


100 'MOVING AVERAGE FILTER IMPLEMENTED BY RECURSION

110 'This program filters 5000 samples with a 101 point moving

120 'average filter, resulting in 4900 samples of filtered data.

130 'A double precision accumulator is used to prevent round-off drift.

140 '

150 DIM X[4999] 'X[ ] holds the input signal

160 DIM Y[4999] 'Y[ ] holds the output signal

170 DEFDBL ACC 'Define the variable ACC to be double precision

180 '

190 GOSUB XXXX 'Mythical subroutine to load X[ ]

200 '

210 ACC = 0 'Find Y[50] by averaging points X[0] to X[100]

220 FOR I% = 0 TO 100

230 ACC = ACC + X[I%]

240 NEXT I%

250 Y[50] = ACC/101

260 'Recursive moving average filter (Eq. 15-3)

270 FOR I% = 51 TO 4949

280 ACC = ACC + X[I%+50] - X[I%-51]

290 Y[I%] = ACC/101 'ACC holds the 101-point sum; divide to get the average

300 NEXT I%

310 '

320 END

TABLE 15-2
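Table 15-2 is written in an old line-numbered BASIC dialect. As a cross-check, here is a minimal Python sketch of the same recursion (Eq. 15-3) placed next to a direct summation, assuming an odd window length M and leaving output points without a complete window at zero. The function names `moving_average_direct` and `moving_average_recursive` are hypothetical, chosen only for illustration; Python floats are double precision, which plays the role of the `DEFDBL ACC` accumulator.

```python
def moving_average_direct(x, m):
    """Direct M-point moving average centered on each output point.

    Only points whose whole window fits inside x are computed; the rest stay 0.0.
    """
    p = (m - 1) // 2
    y = [0.0] * len(x)
    for i in range(p, len(x) - p):
        y[i] = sum(x[i - p:i + p + 1]) / m
    return y


def moving_average_recursive(x, m):
    """Recursive M-point moving average (Eq. 15-3), following Table 15-2.

    m must be odd. Outputs without a complete window are left at 0.0.
    """
    if m % 2 == 0 or len(x) < m:
        raise ValueError("m must be odd and no longer than x")
    p = (m - 1) // 2
    q = p + 1
    y = [0.0] * len(x)
    acc = sum(x[:m])                 # standard summation for the first point, y[p]
    y[p] = acc / m
    for i in range(p + 1, len(x) - p):
        acc += x[i + p] - x[i - q]   # one addition and one subtraction per point
        y[i] = acc / m               # average = running sum / M
    return y


x = [float(n) for n in range(100)]
y = moving_average_recursive(x, 7)
# y[51] equals (x[48] + ... + x[54]) / 7, i.e. 51.0 for this ramp input
```

With M = 7 the loop performs the update y[51] = y[50] + x[54] - x[47]; with M = 101 and 5000 input samples it fills y[50] through y[4949], the same 4900 points that Table 15-2 computes.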

CHAPTER 6

Convolution

Convolution is a mathematical way of combining two signals to form a third signal. It is the

single most important technique in Digital Signal Processing. Using the strategy of impulse

decomposition, systems are described by a signal called the impulse response. Convolution is

important because it relates the three signals of interest: the input signal, the output signal, and

the impulse response. This chapter presents convolution from two different viewpoints, called

the input side algorithm and the output side algorithm. Convolution provides the mathematical

framework for DSP; there is nothing more important in this book.

The Delta Function and Impulse Response

The previous chapter describes how a signal can be decomposed into a group

of components called impulses. An impulse is a signal composed of all zeros,

except a single nonzero point. In effect, impulse decomposition provides a way

to analyze signals one sample at a time. The previous chapter also presented

the fundamental concept of DSP: the input signal is decomposed into simple

additive components, each of these components is passed through a linear

system, and the resulting output components are synthesized (added). The

signal resulting from this divide-and-conquer procedure is identical to that

obtained by directly passing the original signal through the system. While

many different decompositions are possible, two form the backbone of signal

processing: impulse decomposition and Fourier decomposition. When impulse

decomposition is used, the procedure can be described by a mathematical

operation called convolution. In this chapter (and most of the following ones)

we will only be dealing with discrete signals. Convolution also applies to

continuous signals, but the mathematics is more complicated. We will look at

how continuous signals are processed in Chapter 13.
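The decompose, process, and synthesize procedure described above can be checked numerically. The sketch below is a hypothetical illustration: it splits a short signal into impulse components, passes each one through an example linear system (a first difference, y[n] = x[n] - x[n-1], assumed here only because it is simple and linear), and adds the outputs. The result matches passing the original signal through the system directly. All function names are assumptions made for this example.

```python
def impulse_decompose(x):
    """Split x into len(x) signals; component k is zero except at sample k,
    where it equals x[k]."""
    components = []
    for k, value in enumerate(x):
        component = [0.0] * len(x)
        component[k] = value
        components.append(component)
    return components


def synthesize(signals):
    """Add equal-length signals sample by sample."""
    return [sum(samples) for samples in zip(*signals)]


def first_difference(x):
    """Example linear system: y[n] = x[n] - x[n-1], taking x[-1] as 0."""
    return [x[n] - (x[n - 1] if n > 0 else 0.0) for n in range(len(x))]


x = [2.0, -1.0, 0.5, 3.0]
parts = impulse_decompose(x)
assert synthesize(parts) == x            # the decomposition loses nothing

direct = first_difference(x)
piecewise = synthesize([first_difference(c) for c in parts])
assert piecewise == direct               # divide-and-conquer gives the same output
```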

Figure 6-1 defines two important terms used in DSP. The first is the delta

function, symbolized by the Greek letter delta, $\delta[n]$. The delta function is

a normalized impulse, that is, sample number zero has a value of one, while


all other samples have a value of zero. For this reason, the delta function is

frequently called the unit impulse.

The second term defined in Fig. 6-1 is the impulse response. As the name

suggests, the impulse response is the signal that exits a system when a delta

function (unit impulse) is the input. If two systems are different in any way,

they will have different impulse responses. Just as the input and output signals

are often called x[n] and y[n], the impulse response is usually given the

symbol h[n]. Of course, this can be changed if a more descriptive name is

available; for instance, f[n] might be used to identify the impulse response of

a filter.
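A minimal sketch of the two definitions: build $\delta[n]$ as a list and feed it to a system; whatever comes out is that system's impulse response. The system used here, a three-point running average, is a hypothetical example chosen for illustration, not one taken from the text, and the function names are likewise assumptions.

```python
def delta(n_samples):
    """Unit impulse: sample 0 is 1.0, all others are 0.0 (n_samples >= 1)."""
    d = [0.0] * n_samples
    d[0] = 1.0
    return d


def three_point_average(x):
    """Hypothetical example system: y[n] = (x[n] + x[n-1] + x[n-2]) / 3,
    with samples before n = 0 treated as zero."""
    y = []
    for n in range(len(x)):
        window = [x[n - k] for k in range(3) if n - k >= 0]
        y.append(sum(window) / 3.0)
    return y


h = three_point_average(delta(6))
# h[0], h[1], h[2] are each 1/3; h[3], h[4], h[5] are 0.0
```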

Any impulse can be represented as a shifted and scaled delta function.

Consider a signal, a[n], composed of all zeros except sample number 8,

which has a value of -3. This is the same as a delta function shifted to the

right by 8 samples and multiplied by -3. In equation form:

$a[n] = -3\delta[n-8]$. Make sure you understand this notation; it is used in

nearly all DSP equations.

If the input to a system is an impulse, such as $-3\delta[n-8]$, what is the system's

output? This is where the properties of homogeneity and shift invariance are

used. Scaling and shifting the input results in an identical scaling and shifting

of the output. If $\delta[n]$ results in h[n], it follows that $-3\delta[n-8]$ results in

$-3h[n-8]$. In words, the output is a version of the impulse response that has

been shifted and scaled by the same amount as the delta function on the input.

If you know a system's impulse response, you immediately know how it will

react to any impulse.
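The homogeneity and shift-invariance argument can be checked the same way. This sketch, again using a hypothetical three-point running average as the linear system, feeds in $-3\delta[n-8]$ and compares the result with $-3h[n-8]$ built from the measured impulse response. The helper names are assumptions for illustration.

```python
def scaled_shifted_delta(n_samples, shift, scale):
    """Return scale * delta[n - shift] for n = 0 .. n_samples - 1."""
    x = [0.0] * n_samples
    x[shift] = scale
    return x


def three_point_average(x):
    """Hypothetical example system: y[n] = (x[n] + x[n-1] + x[n-2]) / 3,
    with samples before n = 0 treated as zero."""
    return [sum(x[n - k] for k in range(3) if n - k >= 0) / 3.0
            for n in range(len(x))]


def shift_and_scale(signal, shift, scale):
    """Return scale * signal[n - shift], with signal[m] = 0 for m < 0."""
    return [scale * signal[n - shift] if n - shift >= 0 else 0.0
            for n in range(len(signal))]


N = 16
h = three_point_average(scaled_shifted_delta(N, 0, 1.0))    # impulse response
y = three_point_average(scaled_shifted_delta(N, 8, -3.0))   # response to -3*delta[n-8]
expected = shift_and_scale(h, 8, -3.0)                      # -3*h[n-8]
assert all(abs(a - b) < 1e-12 for a, b in zip(y, expected))
```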

Convolution

Let's summarize this way of understanding how a system changes an input

signal into an output signal. First, the input signal can be decomposed into a

set of impulses, each of which can be viewed as a scaled and shifted delta

function. Second, the output resulting from each impulse is a scaled and shifted

version of the impulse response. Third, the overall output signal can be found

by adding these scaled and shifted impulse responses. In other words, if we

know a system's impulse response, then we can calculate what the output will

be for any possible input signal. This means we know everything about the

system. There is nothing more that can be learned about a linear system's

characteristics. (However, in later chapters we will show that this information

can be represented in different forms.)
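The three steps above translate almost line for line into code. The sketch below is a hypothetical helper, not a routine from the text: it treats each input sample x[i] as the impulse $x[i]\delta[n-i]$, adds the correspondingly scaled and shifted copy of h[n] into the output, and therefore returns len(x) + len(h) - 1 output samples.

```python
def convolve_by_superposition(x, h):
    """Return the convolution of x and h as len(x) + len(h) - 1 samples.

    Each input sample x[i] is treated as the impulse x[i]*delta[n - i]; its
    output is h scaled by x[i] and shifted right by i, and all copies are added.
    """
    if not x or not h:
        return []
    y = [0.0] * (len(x) + len(h) - 1)
    for i, xi in enumerate(x):          # step 1: one impulse per input sample
        for j, hj in enumerate(h):      # step 2: scaled, shifted impulse response
            y[i + j] += xi * hj         # step 3: add it into the output
    return y


print(convolve_by_superposition([1.0, 2.0, 3.0], [1.0, -1.0]))
# prints [1.0, 1.0, 1.0, -3.0]
```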

The impulse response goes by a different name in some applications. If the

system being considered is a filter, the impulse response is called the filter

kernel, the convolution kernel, or simply, the kernel. In image processing,

the impulse response is called the point spread function. While these terms

are used in slightly different ways, they all mean the same thing: the signal

produced by a system when the input is a delta function.

Chapter 6- Convolution 109

[Figure 6-1 panels: Delta Function, $\delta[n]$ → Linear System → Impulse Response, h[n]]

FIGURE 6-1

Definition of delta function and impulse response. The delta function is a normalized impulse. All of its samples have a value of zero, except for sample number zero, which has a value of one. The Greek letter delta, $\delta[n]$, is used to identify the delta function. The impulse response of a linear system, usually denoted by h[n], is the output of the system when the input is a delta function.

[Figure 6-2 diagram: x[n] → Linear System, h[n] → y[n]; $x[n] \ast h[n] = y[n]$]

FIGURE 6-2

How convolution is used in DSP. The output signal from a linear system is equal to the input signal convolved with the system's impulse response. Convolution is denoted by a star when writing equations.

Convolution is a formal mathematical operation, just as multiplication,

addition, and integration are. Addition takes two numbers and produces a third

number, while convolution takes two signals and produces a third signal.

Convolution is used in the mathematics of many fields, such as probability and

statistics. In linear systems, convolution is used to describe the relationship

between three signals of interest: the input signal, the impulse response, and the

output signal.

Figure 6-2 shows the notation when convolution is used with linear systems.

An input signal, x[n], enters a linear system with an impulse response, h[n],

resulting in an output signal, y[n]. In equation form: $x[n] \ast h[n] = y[n]$.

Expressed in words, the input signal convolved with the impulse response is

equal to the output signal. Just as addition is represented by the plus, +, and

multiplication by the cross, ×, convolution is represented by the star, $\ast$. It is

unfortunate that most programming languages also use the star to indicate

multiplication. A star in a computer program means multiplication, while a star

in an equation means convolution.
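To make the notation concrete: for discrete signals, the star stands for the standard convolution sum below, with samples outside each signal taken as zero. The short signals x[n] = [1, 2, 3] and h[n] = [1, -1] are arbitrary values chosen only for this worked example. The last line shows the sample-by-sample product that a program's `*` would suggest, which is a different quantity.

```latex
y[n] = x[n] \ast h[n] = \sum_{k} x[k]\, h[n-k]

\text{With } x = [1, 2, 3] \text{ and } h = [1, -1]:
\begin{aligned}
y[0] &= x[0]h[0] = 1 \\
y[1] &= x[0]h[1] + x[1]h[0] = -1 + 2 = 1 \\
y[2] &= x[1]h[1] + x[2]h[0] = -2 + 3 = 1 \\
y[3] &= x[2]h[1] = -3
\end{aligned}

\text{Sample-by-sample product (not convolution): } x[n]\,h[n] = [1\cdot 1,\; 2\cdot(-1),\; 3\cdot 0] = [1, -2, 0]
```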


[Figure 6-3 panels: a. Low-pass Filter, b. High-pass Filter. Each panel shows an Input Signal, an Impulse Response, and an Output Signal, plotted as Amplitude versus Sample number.]

FIGURE 6-3

Examples of low-pass and high-pass filtering using convolution. In this example, the input signal

is a few cycles of a sine wave plus a slowly rising ramp. These two components are separated by